
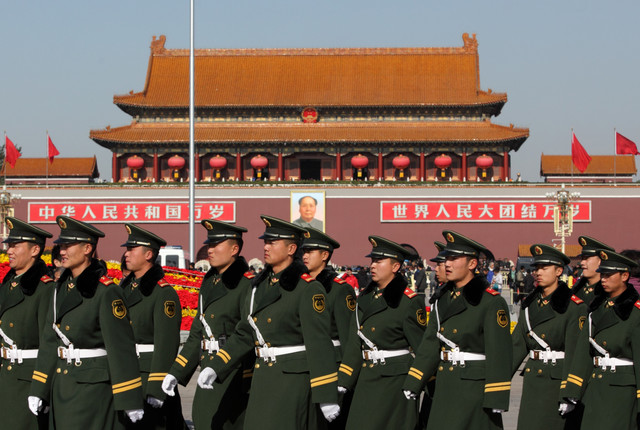

Neutering Volt Typhoon to Deter China

The latest edition of the Seriously Risky Business cybersecurity newsletter, now on Lawfare.

First Amendment Limits on AI Liability

AI-generated content likely enjoys broad First Amendment protection, but remains subject to defamation laws and other established speech restrictions.

Lawfare Daily: Geoff Schaefer and Alyssa Lefaivre Škopac on AI Adoption Best Practices

How can AI be adopted ethically and responsibly?

Negligence Liability for AI Developers

Shifting the focus of AI liability from the systems to the builders.

Governing Robophobia

Human bias against robots could negatively impact AI policy.

Products Liability for Artificial Intelligence

How products liability law can adapt to address emerging risks in artificial intelligence.

Lawfare Daily: Itsiq Benizri on the Regulatory and Political Implications of Thierry Breton’s Resignation from the EU Commission

Why did Breton resign from the EU Commission?

Regulatory Approaches to AI Liability

Federal agencies wield crucial tools for regulating AI liability but face substantial challenges in effectively overseeing this rapidly evolving technology.

AI Liability for Intellectual Property Harms

AI-generated content sparks copyright battles, leaving courts to untangle thorny intellectual property issues.

Could AI Lead to the Escalation of Conflict? PRC Scholars Think So

Chinese defense experts worry that AI will make it more difficult for Beijing to control and benefit from military crises.

Rational Security: The “Ms. Jackson, if You’re Nastya” Edition

This week, Scott Anderson and Alan Rozenshtein sat down with Anastasiia Lapatina and Tyler McBrien.

Laying the Legal Foundation for Civilian Cyber Corps

Cyber volunteers are defending the U.S. against rising cyber threats—the law can help or hinder their effectiveness.
